If data scientists build models and software engineers build products, MLOps engineers make sure models actually work in production. And right now, companies can't hire them fast enough. LinkedIn data shows MLOps engineer postings grew 340% between 2024 and 2026, making it the fastest-growing job title in AI. Median total compensation: $165K at mid-level, climbing to $220K+ for senior roles at companies like Netflix, Stripe, and Databricks.

What MLOps Engineers Do

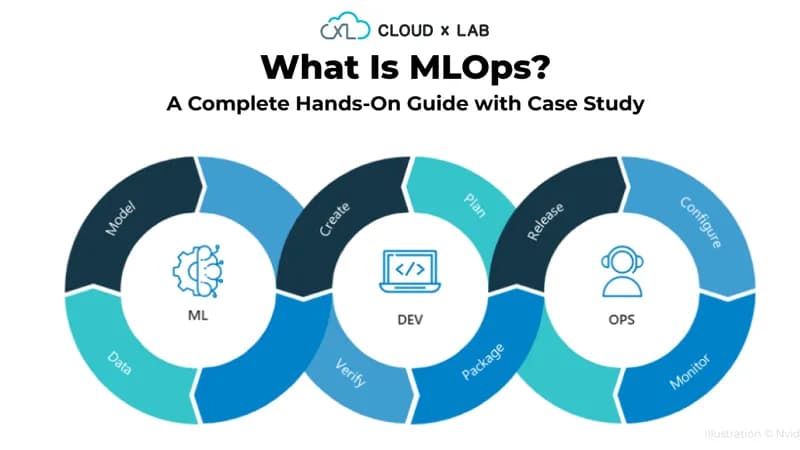

MLOps engineers own the full lifecycle of ML models in production. Their day-to-day responsibilities span infrastructure, automation, and reliability:

- Build training pipelines - Orchestrate data preprocessing, model training, and hyperparameter tuning using tools like Apache Airflow, Kubeflow Pipelines, or Dagster

- Deploy models at scale - Package models into containers, serve them via REST/gRPC APIs, and manage autoscaling with Kubernetes or managed platforms like AWS SageMaker

- Monitor production models - Track model performance, detect data drift and concept drift, and trigger automated retraining when quality degrades

- Manage experiment tracking - Maintain reproducibility across hundreds of experiments using MLflow, Weights & Biases, or Neptune

- Enforce governance - Version control models and datasets, audit model decisions for compliance, and maintain lineage tracking
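
The monitoring responsibility above can be made concrete with a small drift check. What follows is a minimal sketch, not any specific tool's method: it computes the Population Stability Index (PSI), one common drift statistic, between a reference sample and a current sample of a single numeric feature. The function names, the 10-bin layout, and the 0.2 retraining threshold (a frequently quoted rule of thumb) are assumptions chosen for illustration.

```python
import math


def population_stability_index(reference, current, bins=10):
    """PSI between two numeric samples, using equal-width bins over the reference range."""
    if not reference or not current:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(reference), max(reference)
    # Fall back to a width of 1.0 if the reference sample is constant.
    width = (hi - lo) / bins or 1.0

    def bucket_shares(values):
        counts = [0] * bins
        for v in values:
            # Clamp so values outside the reference range land in the edge bins.
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # A small floor avoids log(0) and division by zero for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    ref_shares = bucket_shares(reference)
    cur_shares = bucket_shares(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref_shares, cur_shares))


def should_retrain(reference, current, threshold=0.2):
    """Hypothetical retraining trigger: True when PSI exceeds the assumed threshold."""
    return population_stability_index(reference, current) > threshold
```

In practice a check like this would run per feature on a schedule, and a positive result would typically open an alert or start a retraining pipeline rather than retrain blindly.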

Why Demand Is Exploding

Companies learned the hard way that building a great model is roughly 20% of the work - putting it into production reliably is the other 80%. A figure widely attributed to Gartner holds that 85% of ML models never make it to production. The bottleneck isn't model accuracy - it's infrastructure, reliability, and operational maturity. That's the gap MLOps engineers fill.

The demand multiplier: every company deploying AI needs MLOps, regardless of industry. Financial services, healthcare, retail, and manufacturing are all building ML platforms. Google, Amazon, and Microsoft are scaling their ML platform teams by hundreds of engineers per quarter. Startups with even one deployed model soon discover they need dedicated MLOps support.

The Complete Skill Stack

Core Infrastructure

- Python - The lingua franca of ML. Proficiency in packaging, testing, and writing production-grade Python code (not just Jupyter notebooks)

- Cloud platforms - AWS SageMaker, GCP Vertex AI, or Azure ML. Most roles require deep expertise in at least one

- Docker + Kubernetes - Container orchestration is non-negotiable. Understanding Helm charts, resource limits, and GPU scheduling is table stakes

- CI/CD for ML - GitHub Actions, GitLab CI, or Jenkins pipelines that test data, validate models, and automate deployment
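
The "validate models" step in a CI/CD pipeline often takes the form of a promotion gate. The sketch below shows one possible shape for such a gate, under stated assumptions: the metric names (`accuracy`, `p95_latency_ms`), the tolerances, and the helper names are all hypothetical, and a real pipeline would load these metrics from its own evaluation job.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class GateResult:
    passed: bool
    reasons: List[str]


def validate_candidate(
    candidate: Dict[str, float],
    baseline: Dict[str, float],
    max_regression: float = 0.01,   # assumed tolerance for accuracy loss
    max_latency_ms: float = 100.0,  # assumed latency budget
) -> GateResult:
    """Compare a candidate model's metrics against the current production baseline."""
    reasons = []
    if candidate["accuracy"] < baseline["accuracy"] - max_regression:
        reasons.append(
            f"accuracy {candidate['accuracy']:.3f} regressed beyond tolerance "
            f"from baseline {baseline['accuracy']:.3f}"
        )
    if candidate["p95_latency_ms"] > max_latency_ms:
        reasons.append(
            f"p95 latency {candidate['p95_latency_ms']:.1f} ms exceeds "
            f"budget of {max_latency_ms:.1f} ms"
        )
    return GateResult(passed=not reasons, reasons=reasons)


def exit_code(result: GateResult) -> int:
    """Map the gate result to a process exit code a CI job can act on."""
    return 0 if result.passed else 1
```

A CI job could call `validate_candidate`, print `result.reasons`, and exit with `exit_code(result)` so that a failing gate blocks the deployment stage.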

ML-Specific Tooling

- Experiment tracking - MLflow (open-source standard), Weights & Biases (best UI), Neptune (good for teams)

- Feature stores - Feast (open-source), Tecton, or Databricks Feature Store for managing and serving ML features consistently

- Model serving - Seldon Core, BentoML, TorchServe, or TensorFlow Serving, plus an understanding of latency optimization and batching strategies

- Monitoring - Evidently AI, WhyLabs, or Arize for drift detection, performance dashboards, and alerting

- Data versioning - DVC (Data Version Control) or LakeFS for reproducible datasets and experiments
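
To illustrate the batching strategies mentioned under model serving, here is a minimal sketch of size-based batching: inputs are grouped so the model is invoked once per batch instead of once per request. The names `chunked`, `predict_in_batches`, and `model_fn` are hypothetical, and the sketch is synchronous; production servers usually add a time-based flush so that a partially filled batch does not wait indefinitely.

```python
from typing import Callable, Iterable, Iterator, List, Sequence, TypeVar

T = TypeVar("T")
R = TypeVar("R")


def chunked(items: Iterable[T], max_batch_size: int) -> Iterator[List[T]]:
    """Yield consecutive batches of at most max_batch_size items."""
    if max_batch_size < 1:
        raise ValueError("max_batch_size must be at least 1")
    batch: List[T] = []
    for item in items:
        batch.append(item)
        if len(batch) == max_batch_size:
            yield batch
            batch = []
    if batch:
        # Flush the final, possibly smaller, batch.
        yield batch


def predict_in_batches(
    model_fn: Callable[[Sequence[T]], Sequence[R]],
    inputs: Iterable[T],
    max_batch_size: int = 32,
) -> List[R]:
    """Run model_fn once per batch and return outputs in input order."""
    outputs: List[R] = []
    for batch in chunked(inputs, max_batch_size):
        outputs.extend(model_fn(batch))
    return outputs
```

Larger batches generally improve throughput on GPUs at the cost of per-request latency, which is the trade-off a serving engineer tunes.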

Salary Breakdown by Level and Location

- Junior MLOps Engineer (0-2 years) - $110K-$140K. Focus on pipeline maintenance, monitoring, and basic deployment tasks.

- Mid-Level (3-5 years) - $145K-$185K. Designing platform architecture, leading migration projects, and mentoring juniors.

- Senior (5-8 years) - $185K-$240K. Setting technical direction, cross-team platform strategy, and vendor evaluation.

- Staff / Principal - $250K-$350K+ total comp. Found at companies like Netflix, Spotify, Airbnb, and top-tier startups.

Location premiums: San Francisco (+20%), New York (+15%), Seattle (+12%). Remote roles typically benchmark to national averages, though top-tier companies increasingly offer location-agnostic comp.
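
As a worked example of the arithmetic, the sketch below applies the location premiums to a salary band. It assumes that the bands listed above are national baselines and that a premium scales both ends of a band multiplicatively; the article does not state either point, so treat the output as illustrative. The function and constant names are hypothetical.

```python
# Premiums from the text, in percent. Unlisted locations get no adjustment.
LOCATION_PREMIUM_PCT = {"San Francisco": 20, "New York": 15, "Seattle": 12}


def adjusted_range(base_low: int, base_high: int, location: str) -> tuple:
    """Scale a salary band (in whole dollars) by the location premium."""
    pct = LOCATION_PREMIUM_PCT.get(location, 0)

    def scale(amount: int) -> int:
        # Integer arithmetic avoids floating-point rounding noise.
        return amount * (100 + pct) // 100

    return scale(base_low), scale(base_high)


# Mid-level band of $145K-$185K adjusted for San Francisco (+20%).
low, high = adjusted_range(145_000, 185_000, "San Francisco")
print(f"${low:,} - ${high:,}")  # prints "$174,000 - $222,000"
```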

How to Break In

The most common entry paths into MLOps:

- DevOps/SRE → MLOps: If you already know Kubernetes, Terraform, and CI/CD, add ML framework knowledge and experiment tracking. This is the fastest path - 3-6 months of targeted learning.

- Data Scientist → MLOps: If you build models but want to deploy them properly, learn Docker, cloud platforms, and production engineering practices.

- Software Engineer → MLOps: Add ML fundamentals (PyTorch basics, model evaluation) to your existing engineering skills.

Our catalog of 900+ expert-rated courses includes dedicated MLOps tracks covering the exact tool stack employers want - from Kubernetes fundamentals through advanced model serving and monitoring - rated by working MLOps engineers at companies actively hiring for these roles.